BSN, RN, CCRN

Wound care software series: Woond! The smart 🕶️ for hands-free wound care (Part 2)

Part 2 of the Wound Care software series. If you haven't read Part 1, start there.

TLDR on Part 1: Every company on that map built the same product → nurse picks up a device, points it at a wound, captures something, and documents it. Twelve companies, twelve years of investment, one form factor. But innovation in the wound care market isn't limited to that form factor.

The history of smart glasses + wound care management

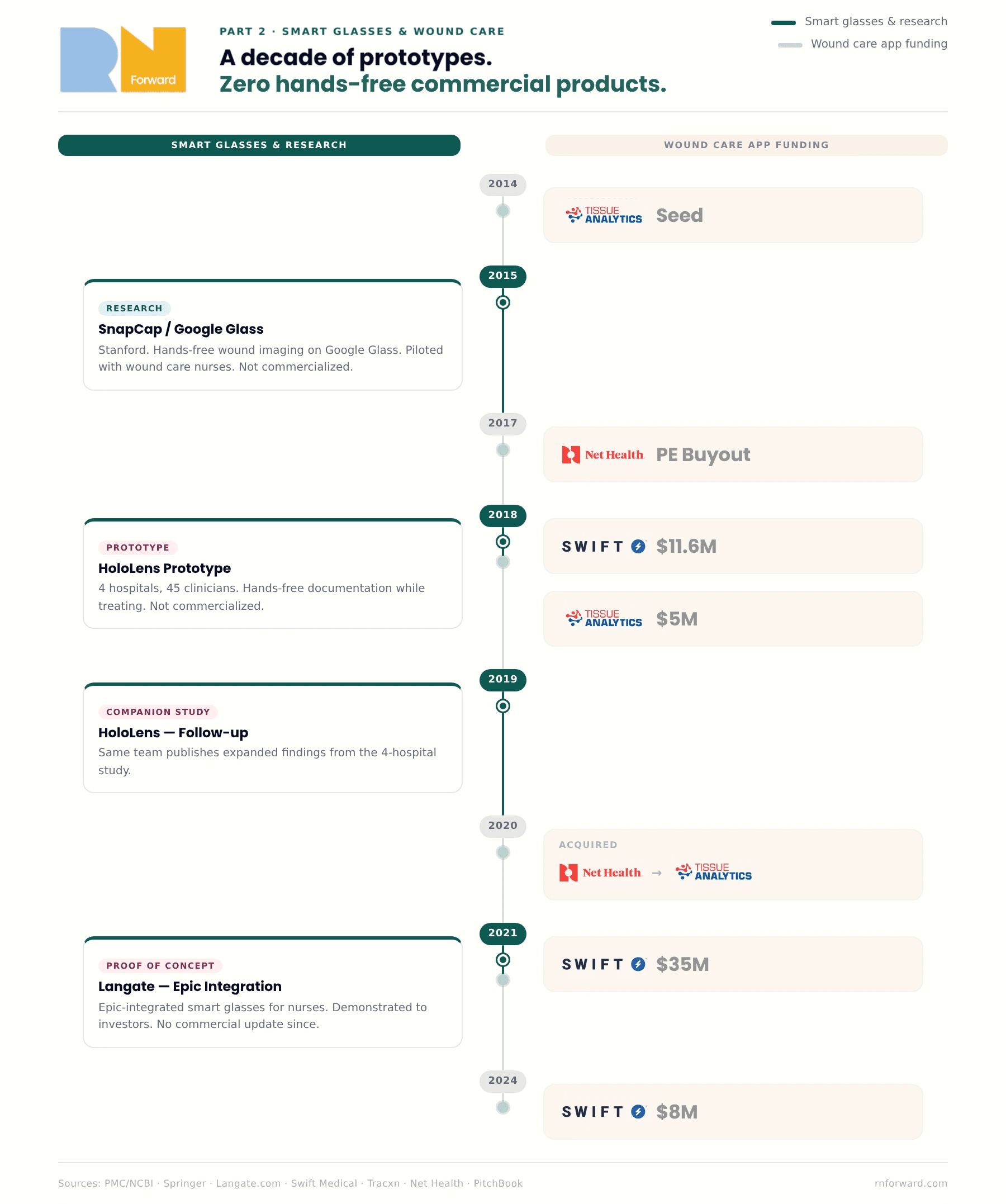

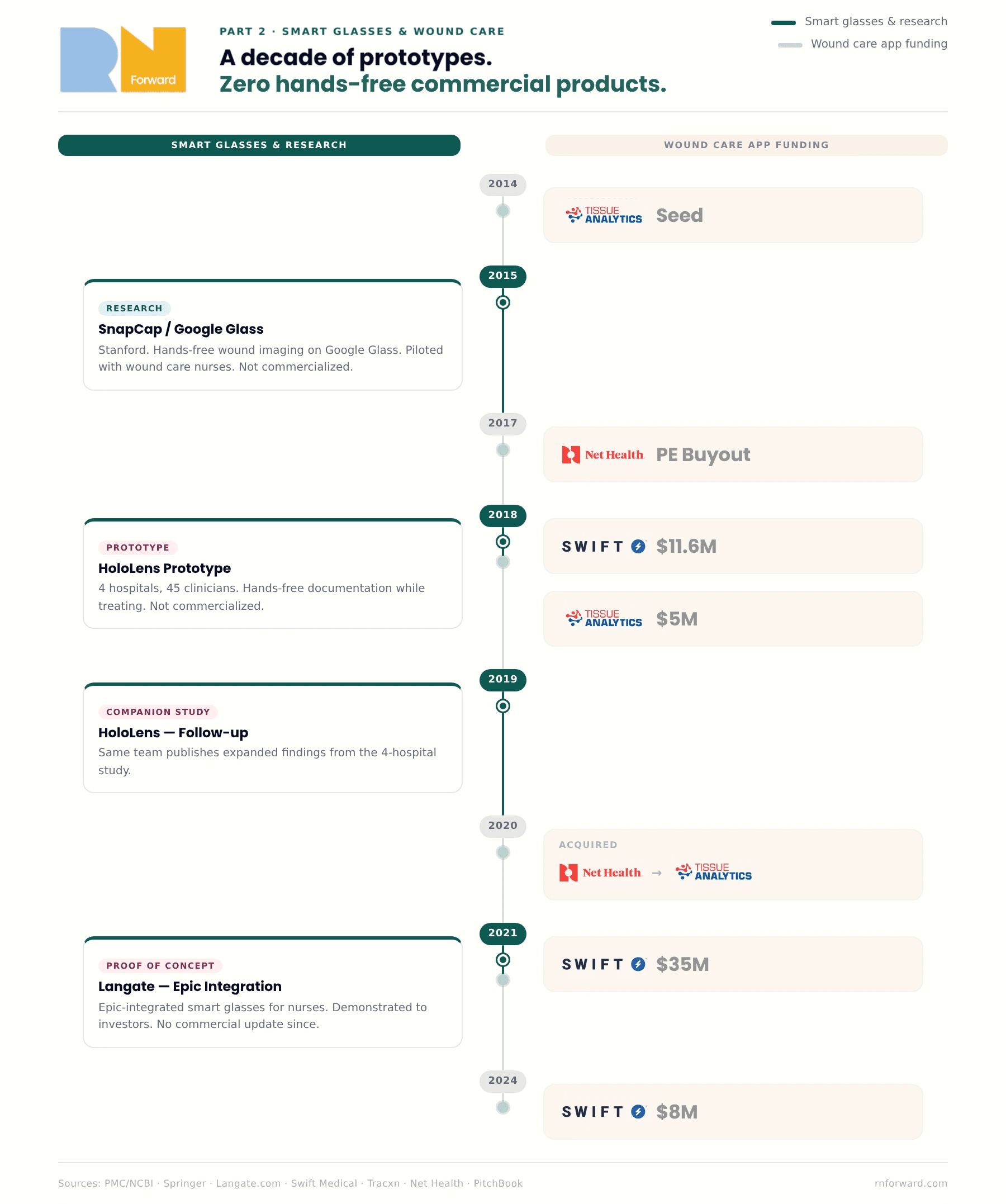

In 2015, a research team at Stanford built something different: SnapCap, which ran on Google Glass. The idea was exactly what it sounds like: a hands-free system that let wound care nurses photograph wounds, tag the images with patient data, and push everything directly into the medical record, all without touching a phone. They piloted it with actual wound care nurses at Stanford Hospital. It worked, and nurses liked it, but no one commercialized it.

A few years later, in 2018 and 2019, a team in Germany built a smart-glasses wound documentation prototype on Microsoft HoloLens. They ran it through a proper study: 45 healthcare workers with wound management experience, four hospitals, ethnographic fieldwork, the whole thing. They found that smart glasses let clinicians document wounds hands-free while actively treating them, something no wound care app has ever managed. The nurses in the study preferred it. (Here's the companion paper from the same research group if you want to go deeper.)

Still, nobody shipped it.

Then in 2021, a software development company called Langate built an Epic-integrated smart glasses proof of concept designed specifically for nurses, at the request of one of its clients. It wasn't wound care specific; instead, it pitched hands-free access to ALL patient data at the bedside, no phone required. They got it working and showed it to investors, but haven't shared any public updates on the product since.

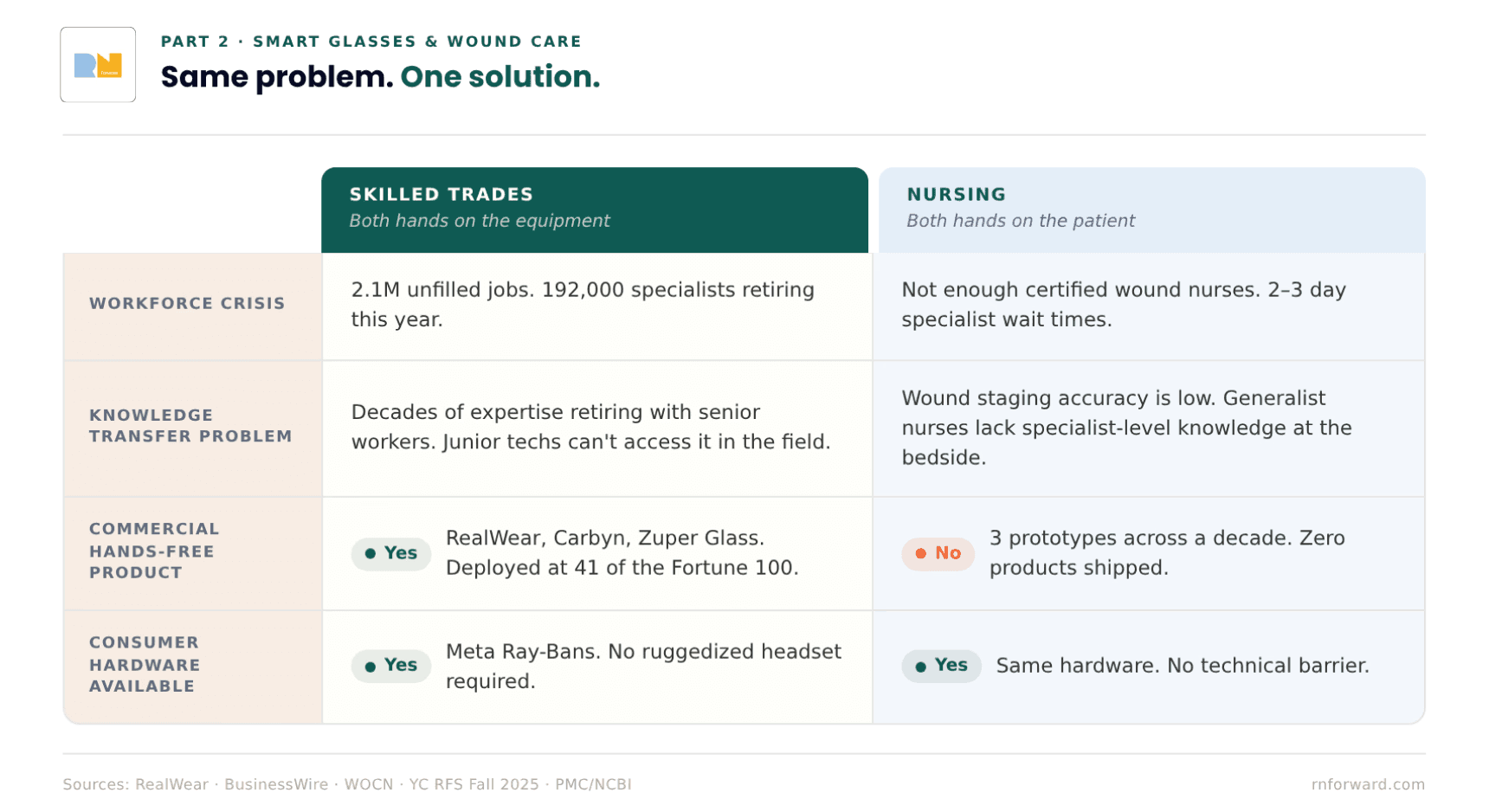

Three separate teams across a decade pointed at the same gap and confirmed it was technically feasible, yet there are still zero commercial products for it. Meanwhile, the wound care apps from Part 1 were being funded and built during this entire window. The idea was proven, and the hardware has only gotten better since.

Meanwhile, the skilled trades industry has figured it out 🏗️

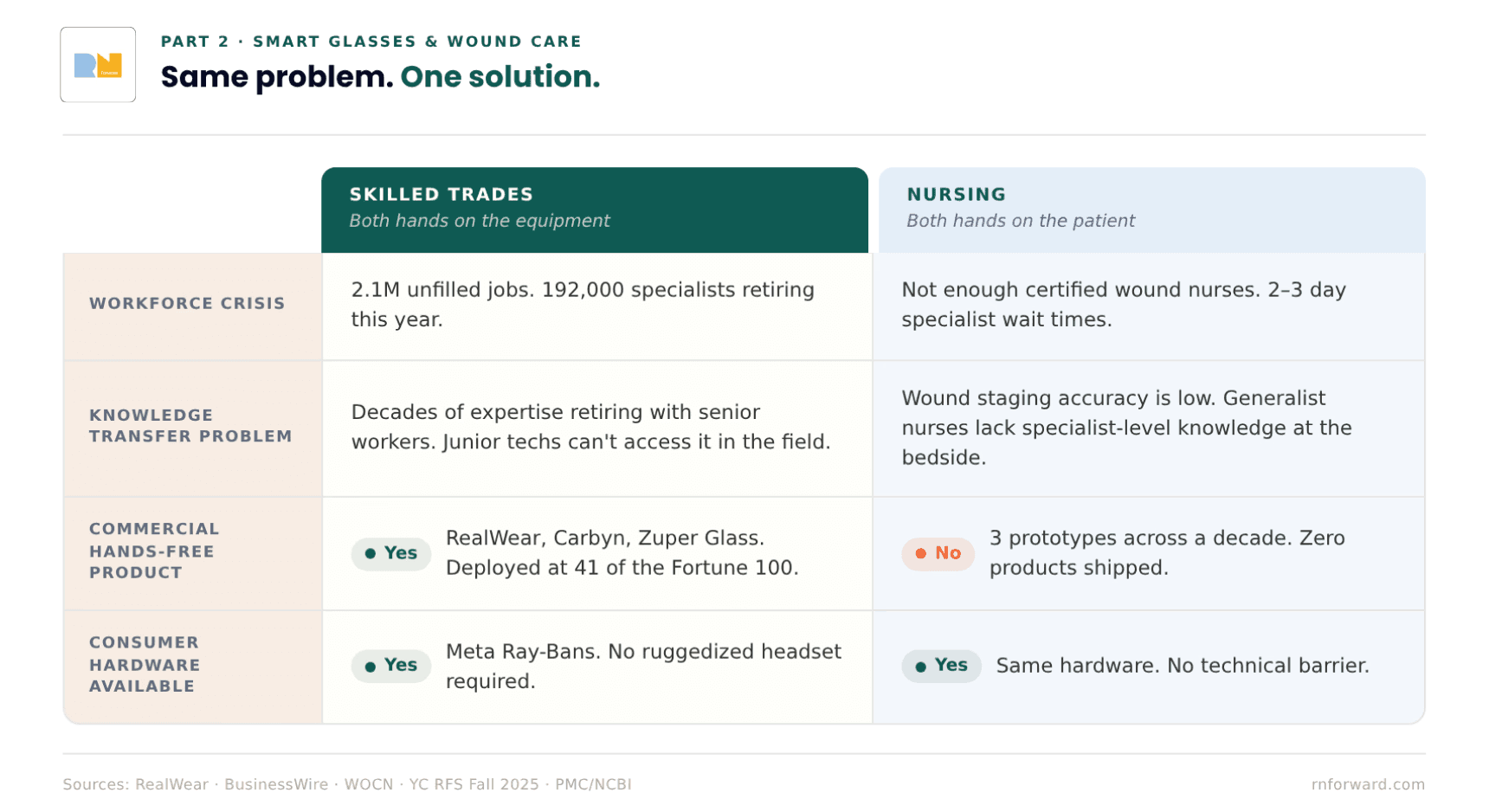

The skilled trades have a version of the same problem. An HVAC technician troubleshooting a rooftop unit at 100 degrees has both hands on the equipment. They can't hold their phone and look up fault codes at the same time. A master electrician with 30 years of knowledge is about to retire, and nobody has figured out how to get that knowledge out of their head and into a form that a junior tech can access on the job without calling them.

Sounds familiar, doesn't it? 😬

Companies like RealWear and Vuzix have been building smart glasses for industrial frontline workers since the mid-2010s, and both have gone deep. RealWear ships devices that are ATEX-certified for use in explosive environments, has a thermal camera module, and has recently added AI-assisted onboarding and knowledge retention to its platform. Vuzix has been in the enterprise AR space just as long. These aren't proofs of concept sitting in a lab somewhere. They're deployed at Shell, BMW, Colgate-Palmolive, and 41 of the Fortune 100. The more recent wave, Carbyn and Zuper Glass, runs on consumer hardware like Meta Ray-Bans rather than ruggedized industrial headsets, which changes the adoption equation considerably for any setting where you can't show up looking like you're about to enter a refinery.

The reason the trades were able to get here is that the problem was clear and the incentive was real. Skilled trades are facing a workforce crisis: 2.1 million unfilled jobs, 192,000 workers retiring this year, decades of expertise walking out the door and not accessible to the next generation coming in. Smart glasses gave them a way to capture that knowledge and get it in front of junior workers in the field, hands-free, in real time.

The nursing version of this is: 1) not enough certified wound nurses, 2) patients waiting two to three days to be seen by a specialist, and 3) staging accuracy low enough that the data on it is genuinely concerning. Same structural problem, but the trades have a hands-free solution while nurses are still using a phone app.

Y Combinator's Fall 2025 Request for Startups called explicitly for AI guidance systems for physical workers using smart glasses, and named healthcare alongside HVAC and manufacturing. The market is converging. The hardware exists. The problem is understood. Nursing just wasn't the first vertical anyone built for. What would it even look like when it actually ships for nursing? Where does it go from there? That's Part 3. *engagement seed planted* 🌱

I went to a hackathon and we tried to build the thing

Out-Of-Pocket ran their Hardware Edition hackathon in San Francisco in April 2026. Their hackathon last year was one of my highlights of 2025, so I had to participate again this year. Our team had five people: two software engineers (one of them a two-time exited founder), a physical therapist turned healthcare executive, a healthcare operator with an MPH background, and me, a critical care nurse who brought the problem.

The problem I pitched the team was inpatient wound care assessment, specifically the version that never makes it into an app demo (those show an elbow wound). The cases I was thinking/complaining about are sacral pressure injuries on 300-pound incontinent patients with skin folds and necrotic tissue. Wounds where you can see fat. Wounds where you can see bone. Cases where you, a floor nurse, have to figure out what to do while repositioning a patient, managing lines, maintaining a clean field, and doing the math on whether to call the wound nurse now or wait until morning. That is the case nobody is building for, and it's the most common hard case in inpatient nursing.

The staging accuracy data makes this more concrete. According to an ACDIS clinical Q&A, wound care nurses at one hospital found that staff nurses correctly identified pressure injuries only about 60 percent of the time. An NCBI patient safety reference reports that fewer than half of nurses with under 20 years of experience felt confident consistently identifying all stages of pressure ulcers, and only about 30 percent of nurses with more than 20 years of experience did. That last number is the counterintuitive one: confidence actually goes down with experience, probably because experienced nurses know enough to understand how hard accurate staging actually is.

If you can't stage it, you can't treat it accurately, right? That's the whole pickle.

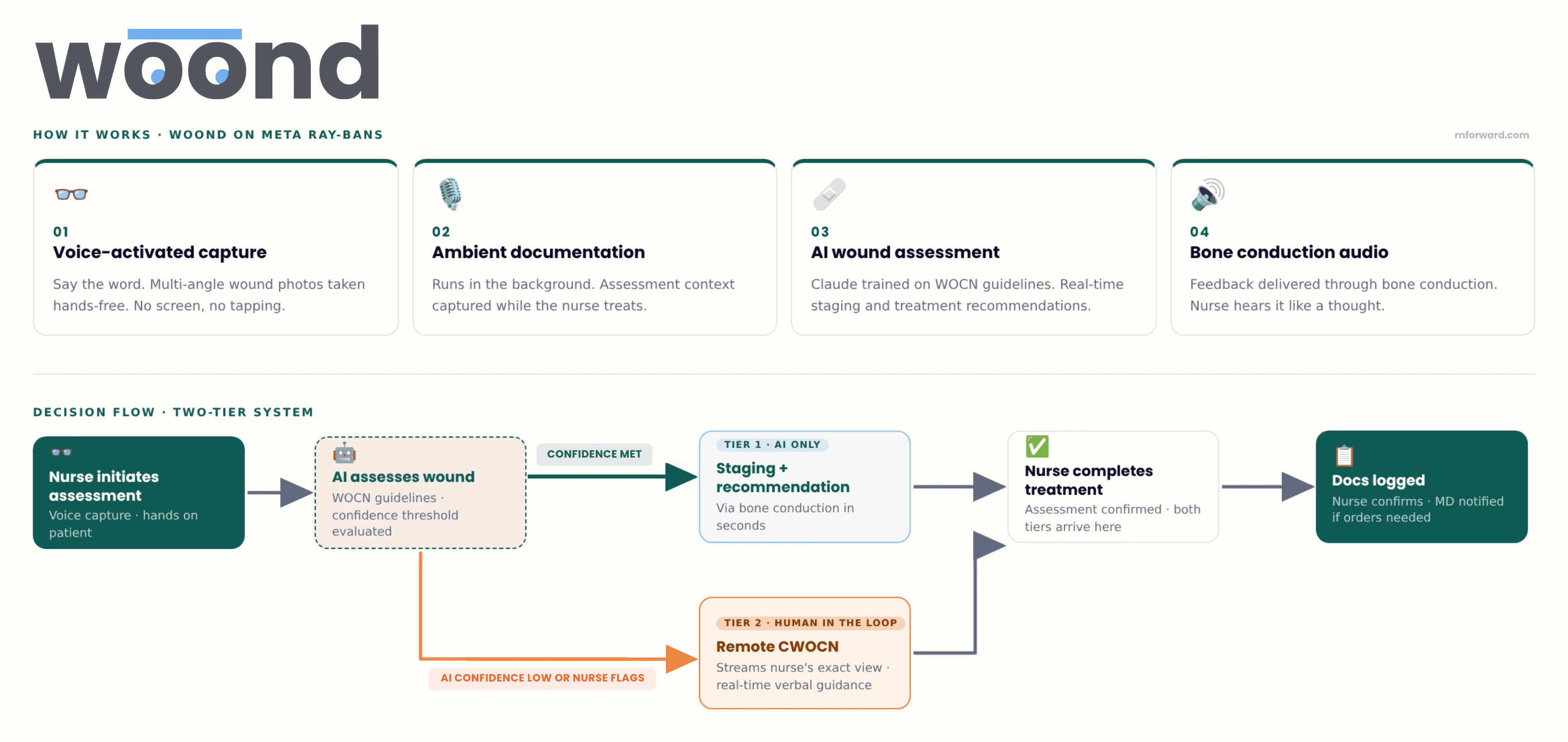

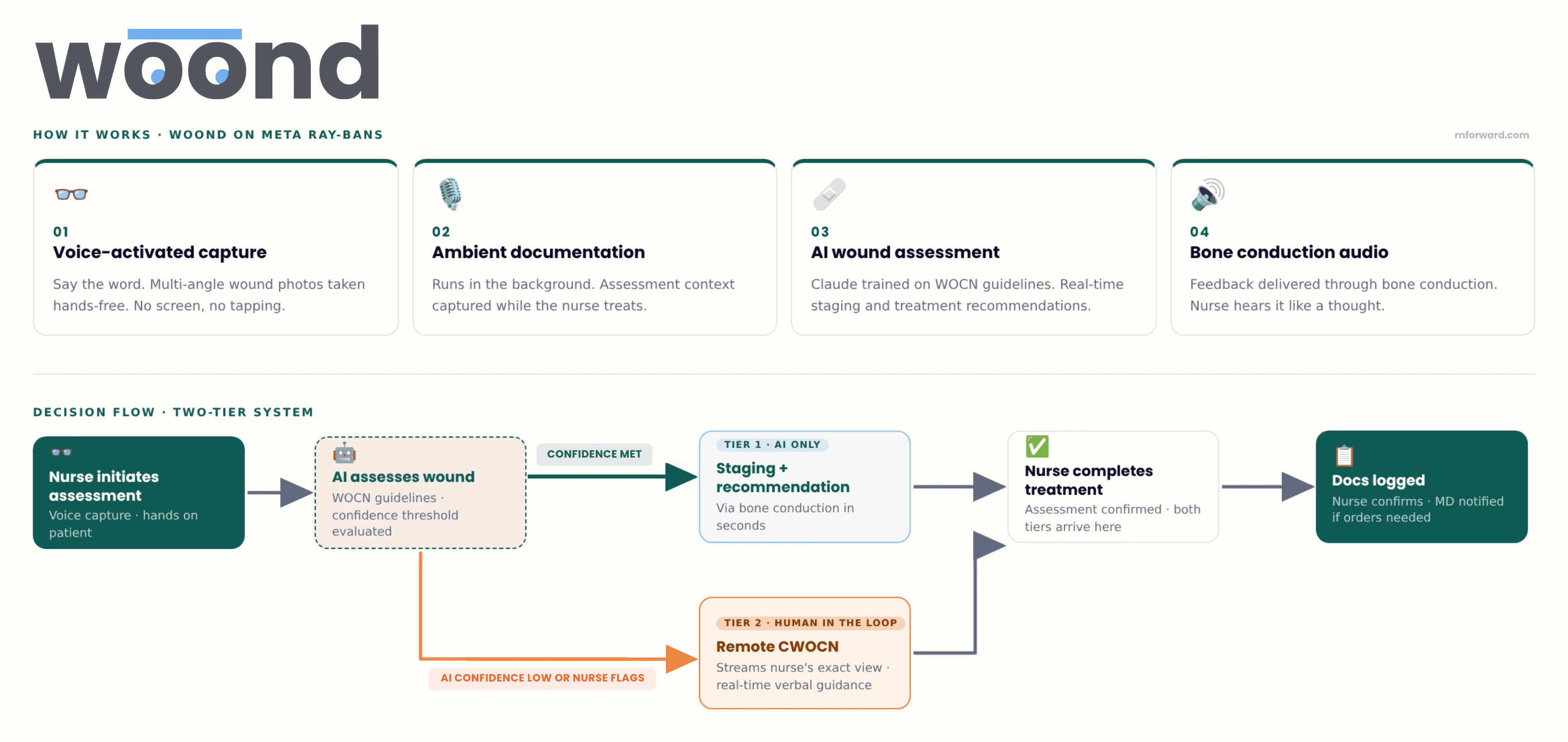

We built Woond, a hands-free, AI-assisted wound assessment system on modded Meta Ray-Bans with: 1) voice-activated photo capture from multiple angles, 2) ambient documentation running in the background, and 3) Claude, grounded in wound care best practices from WOCN guidelines, generating real-time staging assessments and treatment recommendations delivered through bone conduction audio. All so the nurse hears feedback like a thought, with no screen and no dirty-hand-clean-hand-phone-pickup problem.

The implementation works in two tiers. Tier 1 is AI-only: you take a picture, wait a couple of seconds, and it tells you the stage and what to do. That's the default. Tier 2 covers cases where the AI's confidence drops below a set threshold: it gives you the option to escalate to a remote CWOCN (Certified Wound Ostomy Continence Nurse) who can stream in, see exactly what you're seeing, and give you real-time verbal guidance. No more waiting 2 to 3 days for a specialist to come IRL. One remote specialist could theoretically serve multiple hospitals from a single location. Whether that gets staffed internally by a hospital system or licensed out to a third party is an operational question for whoever takes this further.
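For the technically curious, here's a minimal sketch of that two-tier routing, assuming the model returns a stage plus a confidence score between 0 and 1. This is not the actual hackathon code: `Assessment`, `route_assessment`, the 0.8 threshold, and the spoken prompts are all hypothetical stand-ins, since the threshold we'd use in practice is exactly the kind of thing that needs clinical validation.

```python
from dataclasses import dataclass

# Hypothetical value: the real system only specifies "a set threshold".
CONFIDENCE_THRESHOLD = 0.8

@dataclass
class Assessment:
    stage: str            # e.g. "Stage 2"
    confidence: float     # model's confidence in the stage, 0.0 to 1.0
    recommendation: str   # treatment guidance to read aloud

@dataclass
class RoutingDecision:
    tier: int
    spoken_message: str
    offer_escalation: bool

def route_assessment(a: Assessment, threshold: float = CONFIDENCE_THRESHOLD) -> RoutingDecision:
    """Tier 1 (AI-only) by default; offer Tier 2 (remote CWOCN) below the threshold."""
    if not 0.0 <= a.confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if a.confidence >= threshold:
        # Tier 1: read the stage and guidance straight into the nurse's ear.
        return RoutingDecision(1, f"{a.stage}. {a.recommendation}", False)
    # Tier 2: don't read a low-confidence stage out as fact; offer escalation.
    # The nurse still decides whether to take it.
    return RoutingDecision(
        2, "Low confidence on this one. Escalate to a remote wound nurse?", True
    )

decision = route_assessment(Assessment("Stage 2", 0.62, "Placeholder guidance."))
print(decision.tier, decision.offer_escalation)  # 2 True
```

Holding back the tentative stage in Tier 2 is a design choice of this sketch, not something we tested: a guess spoken confidently into your ear is probably worse than an honest "I'm not sure."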

We demoed it on an elbow, the least representative wound I could have chosen relative to what we were actually designing for, but presentation time was limited, so concessions had to be made. Still, it worked. I took a picture, and the AI identified the stage and gave me treatment guidance through the glasses speaker, hands-free, while my hands stayed on the patient.

What didn't work perfectly? The Bluetooth connection couldn't handle everything we were asking the glasses to do simultaneously: recording/chart audio intake, listening for voice commands, streaming to Claude, generating a treatment plan, and communicating the treatment plan. We had to factory reset between practice runs. The glasses also lack LIDAR for depth measurement and infrared for temperature sensing, both of which would help clinically with staging deep tissue injuries and identifying infection.

While this was a hackathon proof of concept, held together in the way all hackathon builds are held together (with tape and prayers), it proved what it was trying to prove: the form factor works, the AI can stage and recommend treatment in real time, and the whole thing fits on your face.

People who hear about this build often ask about the broader augmented reality and mixed reality hardware landscape. Google Glass, Microsoft HoloLens, Apple Vision Pro. If we're already putting clinical AI on glasses for wound care, why not go further? Why not always-on AR for charting, vital signs, alerts, everything? It's a fair question and a genuinely different problem with different barriers that we'll get into in Part 3. The short version for now: what we built is task-specific. On for the wound assessment, off when you're done. That's a much lower bar than asking a nurse to wear a computing device for a 12-hour shift, and the lower bar is exactly why wound care is the right place to start.

Hospitals should pay for this because it's a revenue and liability problem

It wouldn't be very RN Forward thinking of me not to talk about the financial incentives around this product. 🤑

The CMS reimbursement rules create a very specific financial dynamic around pressure injuries. Stage III and IV hospital-acquired pressure injuries are classified as "never events". CMS will not reimburse hospitals for treating them, and hospitals absorb the full cost plus face additional penalties. A single stage IV pressure ulcer costs an average of $129,248 per admission to treat. For a 100-bed hospital, the annual cost burden from pressure injuries can exceed $1.8 million. There are more than 17,000 pressure ulcer-related lawsuits filed every year in the US, second only to wrongful death litigation.

On the other side of that equation, a wound identified and accurately documented on admission can be billed for treatment. Once the wound is in the chart with the right stage, and the documentation shows it was present on admission rather than acquired in the hospital, the billing window opens. Debridement (CPT 97597), wound care consults, dressing supplies, prescription agents like Santyl (an enzymatic debridement medication that runs several hundred dollars per tube): all of that becomes billable instead of absorbed.

Early, accurate identification by the bedside nurse does two things at once: 1) it starts the billing clock for treatment and 2) it creates the documentation that separates a community-acquired wound from a hospital-acquired liability. Two to three days of missed identification isn't just suboptimal care. It's two to three days of unbillable treatment and a growing exposure to a never-event penalty if the wound progresses to stage III or IV.
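To make that two-sided incentive concrete, here's a deliberately simplified model of the logic above. It is NOT the actual CMS present-on-admission rulebook, which is far more detailed; the category and function names are hypothetical, and it only encodes what this post claims: documented on admission means billable, and an undocumented wound that reaches stage III or IV lands as a never event.

```python
from enum import Enum

# Hypothetical categories: a simplification of this post's argument,
# not CMS's actual present-on-admission (POA) reporting rules.
class WoundFinancialStatus(Enum):
    BILLABLE = "present on admission: treatment can be billed"
    AT_RISK = "looks hospital-acquired: never-event exposure if it reaches stage III/IV"
    NEVER_EVENT = "stage III/IV hospital-acquired: not reimbursed by CMS"

def classify_wound(stage: int, documented_on_admission: bool) -> WoundFinancialStatus:
    """Classify a pressure injury by numeric stage (1-4) and admission documentation.

    Unstageable wounds and deep tissue injuries are left out for simplicity.
    """
    if stage not in (1, 2, 3, 4):
        raise ValueError("stage must be 1, 2, 3, or 4 in this simplified model")
    if documented_on_admission:
        # Charted with the right stage at admission: the billing window is open.
        return WoundFinancialStatus.BILLABLE
    if stage >= 3:
        # No admission documentation, so on paper the hospital caused it.
        return WoundFinancialStatus.NEVER_EVENT
    return WoundFinancialStatus.AT_RISK

# Missed on admission while still stage 2: exposure, not yet a never event.
print(classify_wound(2, documented_on_admission=False).name)  # AT_RISK
# Same wound progresses to stage 3 before anyone charts it.
print(classify_wound(3, documented_on_admission=False).name)  # NEVER_EVENT
# Caught and charted at admission.
print(classify_wound(2, documented_on_admission=True).name)   # BILLABLE
```

The same wound lands in a completely different financial bucket depending on one thing: whether a nurse got it into the chart early.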

The phone-based apps from Part 1 technically address this problem. Better imaging means better staging, which means cleaner documentation, which means cleaner claims. But if the nurse can't easily use the app because she literally can't put down what she's doing, the documentation still doesn't happen, making the form factor problem also a revenue problem.

⏱️ Before You Clock Out

👉 Has the engagement seed started to sprout? Stay tuned for Part 3: The Ethics, Privacy, and Practical Case for Smart Glasses in Nursing. Follow RN Forward on LinkedIn to be the first to know when it goes live.

👉 Did you know that more than 25% of the 105 health care companies that achieved valuations of more than US $1B between 2015 and 2024 had at least one founder who is a clinician? Check out this article to understand the value of innovation and venture exposure during clinical training.

👉 Out of Pocket Health throws rad healthcare events all over the US. Sign up for their newsletter to see when the next one near you is!